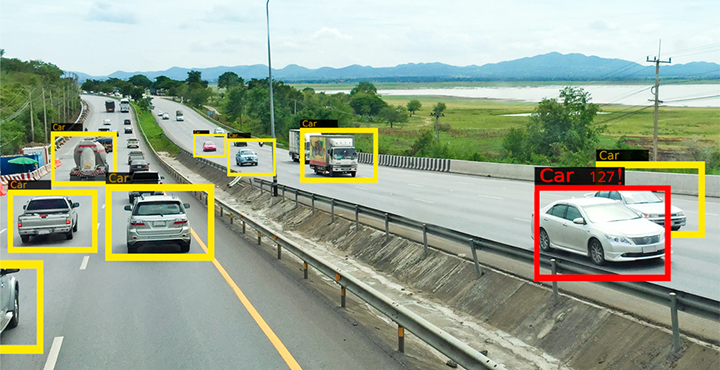

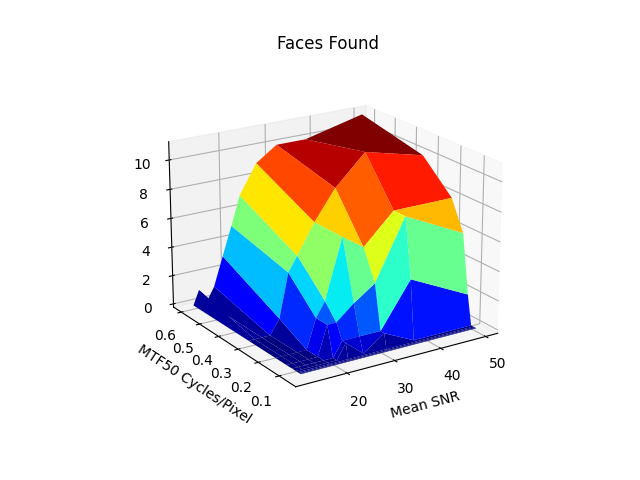

Validating Information Metrics Correlation with Object Detection

We are in the age of Artificial Intelligence that depends on machine vision. This technology surge has necessitated thinking about […]

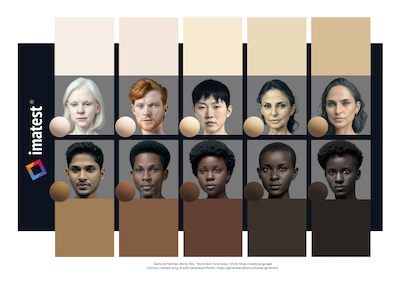

Improving Image Equity: Representing diverse skin tones in photographic test charts for digital camera characterization

Megan Borek; Imatest LLC; Boulder, CO, USA. This paper was presented on 2025-02-05 at Electronic Imaging 2025. Abstract: Accurate representation […]

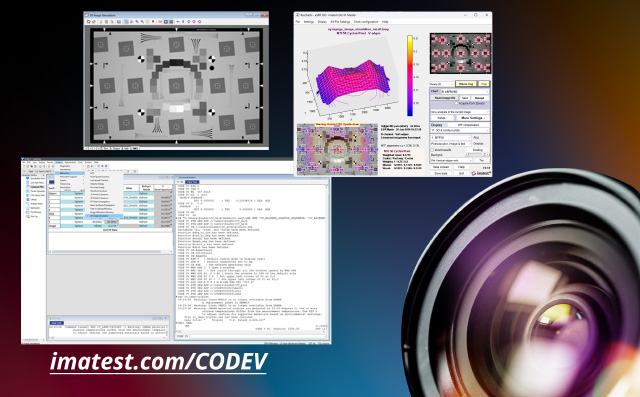

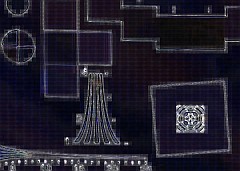

Using Imatest with CODE V 2D Image Simulation (IMS)

Imatest test chart raster files can be used with 2D Image Simulation (IMS) to create images with the degradations […]

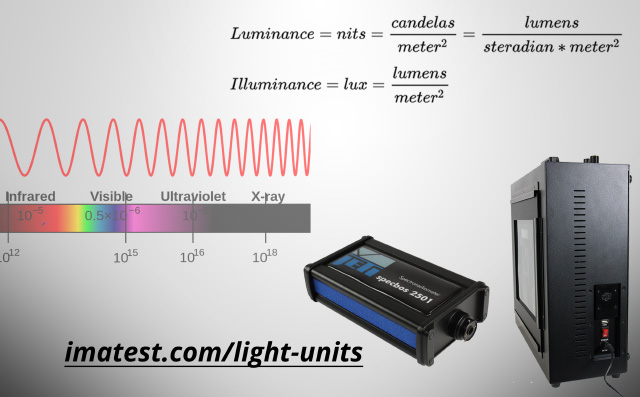

Photometry Luminance and Illuminance units vs. Radiometry Radiance and Irradiance units

This knowledge base post describes why lightboxes are traditionally described in photometric illuminance units of Lux, despite a more appropriate […]

Equity in Camera Technologies: How Consumer Cameras Perform Across Skin Tones

by Meg Borek. We should be designing more equitable cameras. I recently tested a variety of webcam and smartphone devices […]

Imatest Electronic Imaging 2024 Recap

Take a virtual visit to our booth and read the papers we published to advance imaging science.

Call for Participation in Perceptual Image Quality Research

The IEEE P1858 standard for Camera Phone Image Quality has produced two revisions of its Camera Phone Image Quality (CPIQ) […]

2022 Retrospective

Happy Holidays from Imatest. We hope you have a safe and enjoyable holiday season spent with friends and family. We want to thank you all for your continued support throughout 2022 and into 2023. Cheers to a new year filled with opportunity and advancements!

Take a look back at some of our notable product releases of this year:

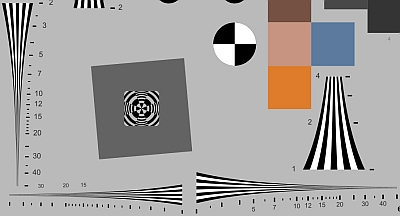

Imatest announces new Target Generator Library

We are happy to announce the release of the new Target Generator Library today. The Imatest Target Generator is free […]

Correlating the Performance of Computer Vision Algorithms with Objective Image Quality Metrics

by Henry Koren. 1. Why care about the quality of your cameras? The task of computer vision (CV) involves analyzing […]

Imatest is Partnered with Edmund Optics

Imatest is proud to announce a new partnership with Edmund Optics. You can visit Edmund Optics Silicon Valley Solutions Center […]

Logarithmic wedges: a superior design

We introduce the logarithmic wedge pattern, which has several advantages over the widely used hyperbolic wedges found in ISO 12233 (current […]

Using images of noise to estimate image processing behavior for image quality evaluation

In the 2021 Electronic Imaging conference (held virtually) we presented a paper that introduced the concept of the noise image, […]

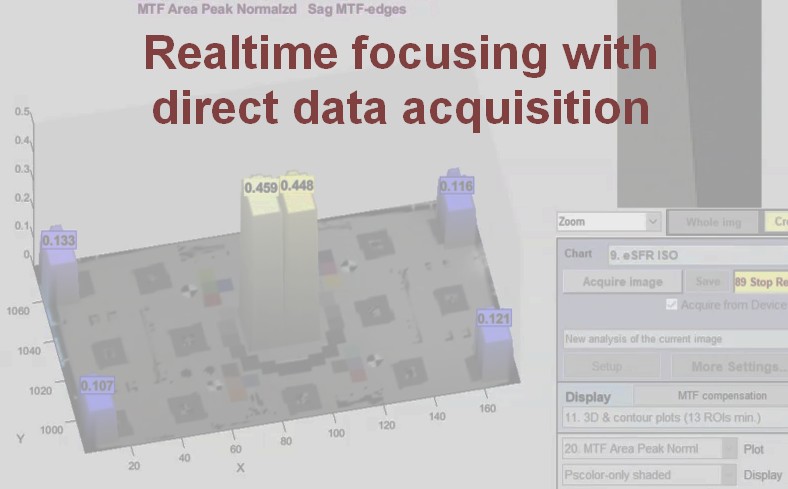

Real-time focusing with Imatest Master direct data acquisition

Speed up your testing with real-time focusing in Imatest Master 2020.2. Recent speed improvements allow for real-time focusing and allow […]

Image Quality Testing for Webcams

Webcams are an increasingly vital tool for working remotely and staying connected with friends and loved ones. As such, webcam […]

Correcting Misleading Image Quality Measurements

We discuss several common image quality measurements that are often misinterpreted, so that bad images are falsely interpreted as good, and we describe how to obtain valid measurements.

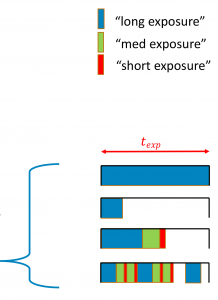

Describing and Sampling the LED Flicker Signal

High-frequency flickering light sources such as pulse-width modulated LEDs can cause image sensors to record incorrect levels. We describe a […]

Validation Methods for Geometric Camera Calibration

Camera-based advanced driver-assistance systems (ADAS) require the mapping from image coordinates into world coordinates to be known. The process of computing […]

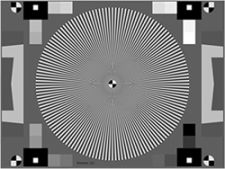

Measuring camera Shannon information capacity with a Siemens star image

Shannon information capacity, which can be expressed as bits per pixel or megabits per image, is an excellent figure of […]

Verification of Long-Range MTF Testing Through Intermediary Optics

Measuring the MTF of an imaging system at its operational working distance is useful for understanding the system’s use case […]