Year: 2022

Imatest at Electronic Imaging 2023

Imatest is excited to attend Electronic Imaging 2023 in San Francisco, California! For the first time in three years, the […]

Imatest Customer Profile: K. Tina Agnes Ruth

Mia: What do you do for work? Tina: I am an imaging engineer at E-con Systems. At E-con Systems we […]

Imatest Customer Profile: Russell Bondi

Mia: Could you go into detail about what you do for work? Russ: Yes, I’m an image quality engineer at […]

Fixed versus Interactive modules

Imatest has two types of analysis module: Fixed (in the left-most column of the Imatest main window) and Interactive (in […]

Imatest releases new Version 22.2

11 October 2022, Boulder, CO. Imatest releases version 22.2 with new updates and features including Stray Light (Flare) Analysis, Auto […]

Imatest Customer Profile: Pawel Achtel, ACS

This month, we have a very special customer profile. We had the chance to chat with Pawel Achtel, a cinematographer […]

Imatest wins Best Validation Simulation Tool award at AutoSens Brussels 2022

Imatest is excited to announce we were awarded Best Validation Simulation Tool for our software and charts at AutoSens Brussels […]

Imatest Releases Stray Light (Flare) Analysis with Upcoming Version 22.2

September 13th, 2022, Brussels, Belgium, and Boulder, CO. Imatest announced at AutoSens Brussels the release of its new stray light […]

Imatest Customer Profile: Fabrizio Ghetti

Mia: What do you do for work? Fabrizio: My job is now video expert in the Italian R&D department of Avaya. […]

Renew expired support through year-end and save 40%

Maintaining current support on your Imatest license provides access to all new version releases and priority technical assistance from our […]

Imatest Customer Profile: Naveen Koul

Mia: The first question is what do you do for work? Naveen: I’m an image quality engineer. I work mainly […]

Imatest Internal Photo Contest Winners

We are happy to showcase the winners of our internal photo contest. Judged by Nasim Mansurov of photographylife.com, our team […]

Imatest announces new Target Generator Library

We are happy to announce the release of the new Target Generator Library today. The Imatest Target Generator is free […]

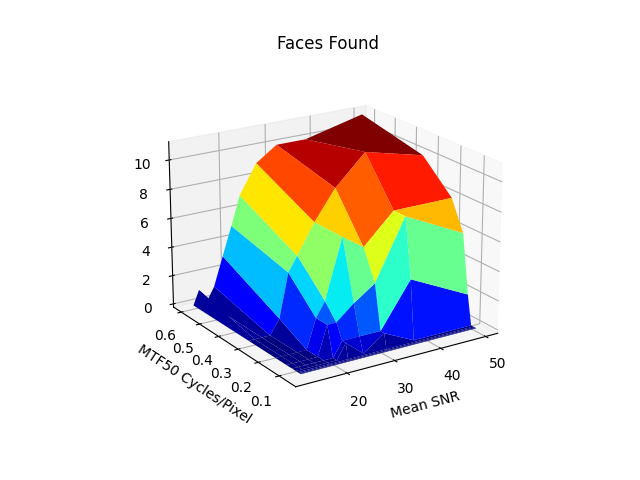

Correlating the Performance of Computer Vision Algorithms with Objective Image Quality Metrics

By Henry Koren. 1. Why care about the quality of your cameras? The task of computer vision (CV) involves analyzing […]

Imatest Partners with Edmund Optics

Imatest is proud to announce a new partnership with Edmund Optics. You can visit the Edmund Optics Silicon Valley Solutions Center […]

Detecting traffic lights with RCCB sensors

RCCB (Red-Clear-Clear-Blue) sensors are widely used in the automotive industry because their sensitivity and Signal-to-Noise Ratio (SNR) are better than […]

Imatest Customer Profile: Dr. Brian Deegan

Imatest is happy to introduce customer profiles! Each month, we will interview one of our Imatest users to share why […]

Imatest Releases Version 22.1

Imatest Version 22.1 introduces new features including Automatic Chart Identification, Internationalization, ISO 12233 Standards Support, Sagittal/Tangential MTF Plot Updates, and […]

Imatest partners with SphereOptics

Imatest is proud to announce SphereOptics as our new authorized reseller. Based in Germany, SphereOptics is a major supplier of technical equipment for […]

Scene-referenced noise and SNR for Dynamic Range measurements

The problem: in the post on Dynamic Range (DR), DR is defined as the range of exposure, i.e., scene […]