Overview and introduction to measurements

Overview and Imatest measurements

Image quality is one of those concepts that’s greater than the sum of its parts. But you can’t ignore the parts if your goal is to produce images of the highest quality. Every image quality factor counts.

This page introduces the key image quality factors and briefly describes how Imatest™ measures them— with links to detailed pages. It is a guide to Imatest organized by image quality factors. Other guides include the Imatest documentation (the Table of Contents).

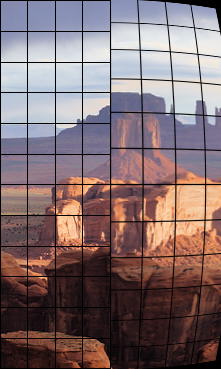

To illustrate the quality factors, we use this early morning image of Monument Valley from Hunt’s Mesa, near the Arizona-Utah border.

Image quality measurements are affected by the

- Lens — Imatest cannot measure lenses by themselves, but lenses can be effectively compared to one another using a single camera body with consistent image processing settings.

- Sensor — Imatest can measure the performance of the Lens+sensor from minimally-processed RAW images if they are available. Sharpness, distortion, vignetting, Lateral Chromatic Aberration, noise, and dynamic range are the principal factors that can be measured at this stage. Most of these measurements can be clearly classified as good/bad.

- Image processing pipeline— typically includes demosaicing, color correction, white balance, application of gamma and tonal response curves, sharpening, and noise reduction— all applied to JPEG images from cameras. Many of these functions can be simulated with the Image Processing module. The output of the pipeline may be compared to the minimally-processed images from converted RAW images. The effect of the pipeline on subjective image quality can be highly scene- and application-dependent, making it difficult to assign “good” or “bad” rankings. Imatest results for these factors need to be interpreted carefully. Examples:

- Higher contrast images often score higher in visual quality assessment tests, but high contrast can cause clipping (visible as burnt-out highlights) in contrasty (often sunlit) scenes. Tonal response curves with “shoulders” can help. See Color/Tone Interactive, Color/Tone Auto, and Stepchart.

- Most consumers find accurate color to be boring, so camera manufacturers “enhance” colors in a number of ways, such as boosting chroma. See Color/Tone Interactive, Color/Tone Auto, and Colorcheck.

- Most consumers dislike noisy images, but software noise reduction (typically lowpass filtering in areas that lack contrasty features) can remove fine texture, resulting in a cartoon-like “plastic” skin appearance. See Log F-Contrast and Random/Dead Leaves.

Summary table

This table summarizes the Image Quality Factors (also called Key Performance Indicators; KPIs) described in detail below. Most of the charts are available from Imatest Store.

| Quality factor (KPI) | Chart | Module | Comments |
|---|---|---|---|
| Camera, lens | | | |
| Blemishes, sensor defects | Plain, uniformly-illuminated surface (flat field) | Blemish | Can be displayed on flat screen monitor with Screen Patterns and opal diffusing glass. Note [1] |
| Color accuracy | X-Rite ColorChecker (24-patch) | Color/Tone Interactive, Color/Tone Auto, Colorcheck | |
| | IT8.7 | Color/Tone Interactive, Color/Tone | |
| | ColorChecker SG, general m×n grids, and many other charts | Color/Tone Interactive, Color/Tone Auto | Note [1] |
| | SFRplus and eSFR ISO multi-purpose charts | SFRplus, eSFR ISO | Best when used with a L\*a\*b\* reference file |
| Dynamic range (DR) | Grayscale step charts (linear and multi-row; primarily transmissive), including the Imatest 36-Patch Dynamic Range chart | Color/Tone Interactive, Color/Tone Auto, Stepchart | Transmission charts are recommended for DR. Algorithm. Many are available from the Imatest Store. |
| | Reflective step charts (also works with transmissive charts) | Dynamic Range | Postprocessor for Stepchart and Color/Tone. Calculates DR by combining several analyses at different exposures. Doesn’t require a transmissive chart. |
| | ISO 12233:2014 E-SFR | eSFR ISO | Measures ISO 15739 DR. Highly automated. Measures several IQ factors. Available from the Imatest Store. |
| Exposure accuracy | Step charts (reflective) | Color/Tone Interactive, Color/Tone, Stepchart | |
| | X-Rite ColorChecker | Color/Tone Interactive, Color/Tone Auto, Colorcheck | |
| Information capacity | All slanted-edge charts | Star, SFR, SFRplus, eSFR ISO, SFRreg, Checkerboard | Excellent figure of merit for cameras. Combines sharpness, noise, and artifacts. Measures signal and noise at same location. See Shannon information capacity. |
| ISO Sensitivity (closely related to Exposure Index) | Step charts | Stepchart | Two ISO sensitivity measurements are displayed when incident light (lux) is entered. Details in ISO Sensitivity and Exposure Index |
| | Various color and step charts | Color/Tone Interactive, Color/Tone Auto | |
| | SFRplus | SFRplus | |
| | X-Rite ColorChecker | Color/Tone Interactive, Color/Tone Auto, Colorcheck | |
| Lateral chromatic aberration | Slanted edge, ISO 12233 charts | SFR | Available from the Imatest Store. Note [2] |
| | SFRplus, ISO 12233:2014 E-SFR, Checkerboard | SFRplus, eSFR ISO, SFRreg, Checkerboard | Available from the Imatest Store. Note [2] |
| | Dot pattern | Dot Pattern | Available from the Imatest Store. Note [2] |
| Lens distortion | Square or rectangular grid or checkerboard | Distortion | Printable by Test Charts or displayed on LCD flat screen monitor with Screen Patterns. |
| | SFRplus, ISO 12233:2014 E-SFR | SFRplus, eSFR ISO, Checkerboard | Highly automated. Measures several factors. Results in the Image & Geometry display. |
| | Dot pattern | Dot Pattern | Available from Imatest Store. |
| Light falloff, vignetting | Plain, uniformly-illuminated surface | Light Falloff | Can be displayed on flat screen monitor with Screen Patterns. Opal diffusing glass recommended. |
| Noise | Step charts | Stepchart | |
| | X-Rite ColorChecker | Color/Tone Interactive, Color/Tone Auto, Colorcheck | |
| | SFRplus | SFRplus | Measures flat areas near slanted edges. Best with low (4:1) contrast charts. |
| | eSFR ISO | eSFR ISO | Measures noise from grayscale surrounding center of chart. See Color/Tone Interactive/Color/Tone/eSFR ISO Noise. |
| | Wide variety of grayscale and color charts | Color/Tone Interactive | Works with a large variety of grayscale and color charts if patches are large enough. Can measure sensor (raw) noise. See Color/Tone Interactive/Color/Tone/eSFR ISO Noise. |
| Sharpness (MTF) | Slanted-edge, ISO 12233 | SFR, Rescharts | ISO 12233:2000 and 2014 charts available from Imatest Store. Edges printable by Test Charts. Algorithm |
| | SFR, SFRplus, eSFR ISO, SFRreg, Checkerboard | SFRplus, eSFR ISO, SFRreg, Checkerboard | Highly automated. Measures several factors. Available from Imatest Store. |
| | Other charts: Star, Spilled Coins, Wedge, etc. | Rescharts, Star, Log F-C, Random, Wedge… | Both interactive and fixed modules. Each responds differently to signal processing. |
| Texture detail | Log F-Contrast, Spilled Coins (Dead Leaves) | Log F-Contrast, Random/Dead Leaves | |
| Tonal response (contrast) | Grayscale step charts (reflective and transmissive) | Color/Tone Interactive, Color/Tone Auto, Stepchart | Includes a huge variety of charts. Color/Tone Interactive and Color/Tone are very versatile: recommended. |
| | ColorChecker, ColorChecker SG, IT8.7, Step Charts | Color/Tone Interactive, Color/Tone Auto | Color charts with gray scales for measuring tones |
| | Multi-purpose charts: ISO 12233:2014 E-SFR, SFRplus | eSFR ISO, SFRplus | Measure several image quality factors along with tonal response. |
| Veiling glare (lens flare) | Reflective Q-13 or Q-14 step chart or plain white chart with “black hole” (ISO 18844) | Stepchart, Flatfield (ISO 18844 measurement) | See Veiling Glare. Note [1] |
| Color moiré (aliasing) | Wedges in ISO 12233:2000 and eSFR ISO charts | eSFR ISO, Wedge | A color aliasing metric, derived from color moiré in wedges, has been added to Imatest 5.2. Color moiré is visible (but not measurable) in Log F-Contrast. |
| Software artifacts | Log F-Contrast; any arbitrary image | Log F-Contrast, SSIM | Notes [1,2] Can be simulated with Image Processing. SSIM measures degradation due to image processing (primarily compression) by comparing two images. |
| Prints (These functions work better with Gamutvision. Deprecated in Imatest.) | | | |
| Dmax (deepest black tone) | Custom test chart printed from file, scanned on profiled flatbed scanner | Print Test | Gamutvision extracts these properties from ICC profiles. |
| Color gamut | Custom test chart printed from file, scanned on profiled flatbed scanner | Print Test | Gamutvision extracts these properties from ICC profiles. |

Notes: [1] Not available in Imatest Studio. Available in Master. [2] Can be printed from Test Charts, but we recommend purchasing it from the Imatest Store.

Image Quality Factors (KPIs) for cameras and lenses

Image Quality Factors are also called Key Performance Indicators (KPIs).

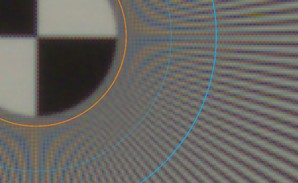

Sharpness

Sharpness is arguably the most important single image quality factor: it determines the amount of detail an image can convey. The image on the upper right illustrates the effects of reduced sharpness (from running Image Processing with one of the Gaussian filters set to 0.7 sigma).

Device or system sharpness is measured as a Spatial Frequency Response (SFR), also called Modulation Transfer Function (MTF). MTF is the contrast at a given spatial frequency (measured in cycles or line pairs per distance) relative to low frequencies. The 50% MTF frequency correlates well with perceived sharpness, much better than the old vanishing resolution measurement, which indicated where the detail wasn’t. Sharpness and MTF are introduced in Sharpness: What is it and how is it measured?

The perceived sharpness of a print or display is measured by Subjective Quality Factor (SQF) or Acutance, which are derived from MTF, the Contrast Sensitivity Function of the human eye, and viewing conditions.

Imatest’s primary sharpness measurement uses slanted-edge patterns analyzed by SFR, Slanted-edge SFR (a part of Rescharts), SFRplus, eSFR ISO, SFRreg, or Checkerboard (the latter four are highly automated), using targets you can purchase or print with the Imatest Test Charts module. Concise instructions are found in How to test lenses with Imatest.

Several alternative patterns, which cause cameras to apply differing amounts of sharpening and noise reduction, can be used for measuring MTF. All require more real estate than the slanted-edge. They include the Star, Spilled Coins (Random/Dead Leaves), Log F-Contrast, and Wedge charts listed in the summary table. The MTF Measurement Matrix compares the different methods.

Figure: original vs. blurred; original vs. oversharpened.
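The relationship between an edge profile, MTF, and the 50% MTF frequency can be sketched in a few lines. This is only an illustration of the general edge-to-MTF idea (differentiate the edge, take the Fourier transform, normalize to low frequencies); it is not Imatest's slanted-edge algorithm, which also handles edge-angle estimation, binning, and other corrections. The function names `mtf_from_edge` and `mtf50`, the 4x oversampling, and the synthetic Gaussian edge are assumptions made for this example.

```python
import numpy as np

def mtf_from_edge(esf, samples_per_pixel=4):
    """Estimate MTF from a 1-D edge profile (edge spread function).

    Returns (frequencies in cycles/pixel, MTF normalized to 1 at zero frequency).
    """
    lsf = np.diff(np.asarray(esf, dtype=float))  # line spread function = derivative of the edge
    lsf = lsf * np.hanning(lsf.size)             # taper the ends to reduce truncation effects
    spectrum = np.abs(np.fft.rfft(lsf))
    freqs = np.fft.rfftfreq(lsf.size, d=1.0 / samples_per_pixel)
    return freqs, spectrum / spectrum[0]

def mtf50(freqs, mtf):
    """Lowest frequency where MTF drops to 0.5 (linear interpolation), or None."""
    below = np.nonzero(mtf < 0.5)[0]
    if below.size == 0:
        return None
    i = below[0]
    slope = (freqs[i] - freqs[i - 1]) / (mtf[i] - mtf[i - 1])
    return freqs[i - 1] + (0.5 - mtf[i - 1]) * slope

# Synthetic test edge (hypothetical values): a step blurred by a Gaussian
# with sigma = 1 pixel, sampled 4 times per pixel.
sigma, oversample = 1.0, 4
x = np.arange(-128, 128) / oversample
esf = np.cumsum(np.exp(-0.5 * (x / sigma) ** 2))

freqs, mtf = mtf_from_edge(esf, oversample)
f50 = mtf50(freqs, mtf)

# For a pure Gaussian blur, theory gives MTF50 = sqrt(ln 2 / 2) / (pi * sigma) cycles/pixel.
f50_theory = np.sqrt(np.log(2) / 2) / (np.pi * sigma)
print(f"measured MTF50 = {f50:.4f} c/p, theory = {f50_theory:.4f} c/p")
```

Because the blur is Gaussian, the measured value can be checked against the closed-form result, which is why a synthetic edge is used here instead of a real image.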

System sharpness is affected by the lens (design and manufacturing quality, position in the image field, aperture, and, for zoom lenses, focal length), sensor (pixel count and anti-aliasing filter), and signal processing (especially sharpening and noise reduction). In the field, sharpness is affected by camera shake (a good tripod can be helpful), focus accuracy, and atmospheric disturbances (thermal effects and aerosols).

Some lost sharpness can be restored by sharpening, but sharpening has limits. It can’t restore detail where MTF is very low (under about 10%). Oversharpening, illustrated on the right, can also degrade image quality (especially at large magnifications) by causing “halos” to appear near contrast boundaries. Images from many compact digital cameras and phones are oversharpened.

Texture detail

Many consumer cameras, especially cameras with small image sensors or pixels (mobile imaging devices and point-and-shoots), have signal processing that varies over the image plane. Sharpening is applied near contrasty features (like edges), but noise reduction (lowpass filtering) is applied, often strongly, in the absence of sharp features, resulting in loss of texture detail. Such cameras will perform well on slanted-edge tests while producing unsatisfactory images. To emphasize this we show a real camera phone image, where the window and shingles have been strongly sharpened, but texture in the pine shrubs has been completely lost.

Imatest measures texture sharpness in two modules: Log F-Contrast and Random/Dead Leaves.

Figure: original vs. processed.

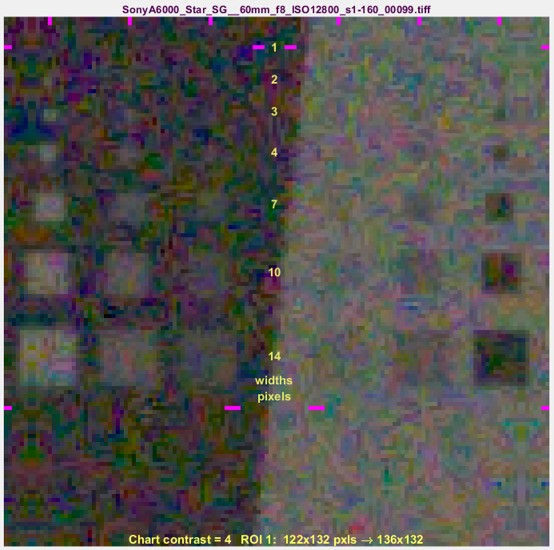

Noise

Noise is a random variation of image density, visible as grain in film and pixel level variations in digital images. It arises from the effects of basic physics (the photon nature of light and the thermal energy of heat) inside image sensors and amplifiers. Noise and its measurement are introduced in Noise in photographic images and described in more depth in Color/Tone & eSFR ISO Noise.

Noise is measured by several Imatest modules. Color/Tone Interactive, Color/Tone Auto, and Stepchart produce the most detailed results, but noise is also measured in Colorcheck, SFR, SFRplus, eSFR ISO, and Flatfield. ISO 15739 Visual Noise estimates the visual strength of noise using the noise spectrum, a model of the human visual system, and viewing conditions.

Noise depends strongly on pixel size: larger pixels collect more light and produce less noise. It can be very low in digital SLRs, which have pixels at least 4 microns square. But it can get ugly in compact digital cameras and camera phones with tiny sensors, especially at high ISO speeds or in dim light. It is also affected by sensor technology and manufacturing quality.

Figure: original vs. noise added.
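The dependence of noise on pixel size follows from the photon nature of light mentioned above. As a rough sketch, assuming photon (shot) noise is the only noise source and follows Poisson statistics, a pixel with four times the area collects about four times the photons and should show about twice the signal-to-noise ratio. The photon counts and the helper `patch_snr` below are hypothetical, and read noise, dark current, and image processing are ignored.

```python
import numpy as np

def patch_snr(patch):
    """Mean signal divided by RMS noise (sample standard deviation) of a flat patch."""
    patch = np.asarray(patch, dtype=float)
    return patch.mean() / patch.std(ddof=1)

rng = np.random.default_rng(0)

photons_small = 500                # hypothetical mean photons per small pixel
photons_large = 4 * photons_small  # 4x the pixel area collects about 4x the photons

# Simulate uniformly illuminated patches with photon shot noise only.
small = rng.poisson(photons_small, size=(200, 200))
large = rng.poisson(photons_large, size=(200, 200))

snr_small = patch_snr(small)
snr_large = patch_snr(large)
print(f"SNR small pixels: {snr_small:.1f}, large pixels: {snr_large:.1f}, "
      f"ratio: {snr_large / snr_small:.2f}")
```

For Poisson noise the SNR is the square root of the mean photon count, so the ratio printed above should be close to 2.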

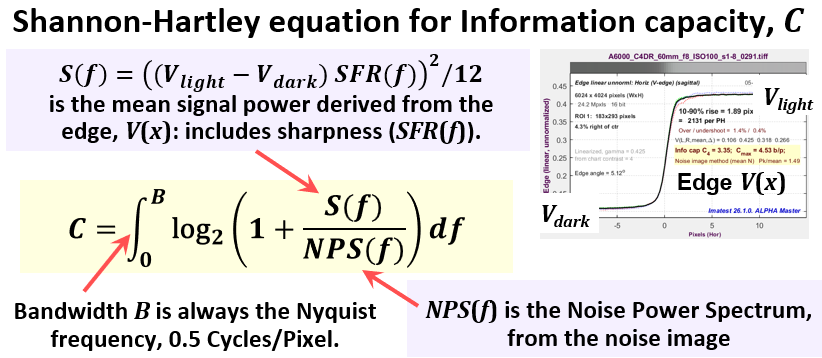

Software noise reduction (NR) reduces the visibility of noise by smoothing the image, excluding areas near contrasty boundaries. This technique works well, but it can obscure fine, low contrast detail. The Log Frequency-Contrast module clearly measures the effects of software noise reduction. The Random/Dead Leaves module uses a pattern that tends to maximize noise reduction (it’s the worst case for noise reduction), in contrast to the slanted-edge (the opposite limiting case), which tends to maximize sharpening and minimize noise reduction.

Information capacity and metrics

The white paper, “Camera information capacity: a key performance indicator for Machine Vision and Artificial Intelligence systems”, briefly introduces information theory, describes the camera information capacity measurement, then shows results (including the effects of artifacts). Information capacity can be easily measured from slanted edges: just set a dropdown menu to turn on the calculation. Measuring Camera Information Capacity with slanted-edges describes the new method in detail.

Color accuracy vs. appeal

Figure: original vs. color-shifted.

Image Quality Video Series: Color Accuracy

Color accuracy is an important but ambiguous image quality factor. It can be critical in medical and technical photography, but less so in pictorial (consumer) photography, where many viewers prefer enhanced color saturation, particularly in “memory colors”: foliage, sky, and skin. Accurate color is not the same as “pleasing” color. Whatever the application, it is important to measure a camera’s color response: its color shifts, saturation, and white balance effectiveness. Color difference measurements are described in Wikipedia and in the Color/Tone and Colorcheck appendix.

Color response is measured by Color/Tone Interactive and Color/Tone Auto using the 24-patch X-Rite ColorChecker, ColorChecker SG, IT8.7, and many other standard and custom charts. (It is also measured by Colorcheck, but only with the widely available 24-patch X-Rite ColorChecker® chart.) These charts may be included in scenes for white balance testing. Color accuracy may be measured against standard chart reference values or CSV reference files that contain measured color values, which may be altered to reflect customer preferences. Measuring color patches is described here.

Color accuracy is affected by the Bayer color filter array and by the signal processing and white balance algorithm in the camera or RAW converter. Flare light (veiling glare) in lenses tends to reduce color saturation. Color/Tone Interactive and Color/Tone Auto can calculate a color correction matrix.
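As a concrete illustration of a color difference measurement, the sketch below computes the simplest such metric, the CIE 1976 difference \(\Delta E^*_{ab} = \sqrt{\Delta L^{*2} + \Delta a^{*2} + \Delta b^{*2}}\), between measured and reference CIELAB patch values. It also computes a saturation figure as the ratio of mean measured chroma to mean reference chroma; that definition, the function names, and the patch values are assumptions for this example, and other color difference formulas exist that weight the terms differently.

```python
import numpy as np

def delta_e_ab(lab_measured, lab_reference):
    """CIE 1976 color difference for each patch (rows are L*, a*, b*)."""
    diff = np.asarray(lab_measured, dtype=float) - np.asarray(lab_reference, dtype=float)
    return np.sqrt(np.sum(diff ** 2, axis=-1))

def mean_saturation_percent(lab_measured, lab_reference):
    """Mean measured chroma as a percentage of mean reference chroma (assumed definition)."""
    m = np.asarray(lab_measured, dtype=float)
    r = np.asarray(lab_reference, dtype=float)
    chroma_m = np.hypot(m[..., 1], m[..., 2])
    chroma_r = np.hypot(r[..., 1], r[..., 2])
    return 100.0 * chroma_m.mean() / chroma_r.mean()

# Hypothetical L*a*b* values for two patches.
reference = [[50.0, 10.0, 10.0], [60.0, 0.0, 20.0]]
measured = [[50.0, 13.0, 14.0], [60.0, 0.0, 25.0]]

de = delta_e_ab(measured, reference)
sat = mean_saturation_percent(measured, reference)
print("Delta E per patch:", de)
print(f"Saturation: {sat:.1f}% of reference")
```

A saturation above 100% corresponds to the chroma boost that many consumer cameras apply, which raises the color difference even though viewers may find the result more pleasing.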

Lens (optical) distortion

Lens (optical) distortion is an aberration that causes straight lines to curve near the edges of images. It can be troublesome for architectural photography and photogrammetry (measurements derived from images). The simplest approximation is the 3rd order equation, \( r_u = r_d + k r_d^3 \), where \(r_u\) is the undistorted radius and \(r_d\) is the distorted radius. Depending on the sign of \(k\), it can be either “barrel” (shown on the right) or “pincushion.” A mixture known as “mustache” distortion may occur for complex lenses when the coefficients of the 5th order approximation (\( r_u = r_d + h_1 r_d^3 + h_2 r_d^5 \)) have opposite signs.

Distortion: methods and modules is a good introduction to distortion. It lists the mathematical models and the modules used to measure distortion, and illustrates results.

Figure: original vs. barrel-distorted.
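The 3rd order equation can be applied directly to correct point coordinates. In the minimal sketch below, each point is scaled radially by \(r_u / r_d = 1 + k r_d^2\). The function name `undistort_points`, the use of normalized coordinates centered on the image, and the value of `k` are assumptions for illustration. With this equation a positive \(k\) gives \(r_u > r_d\), meaning the distorted points sat closer to the center than they should, which is the barrel case.

```python
import numpy as np

def undistort_points(points, k, center=(0.0, 0.0)):
    """Map distorted (x, y) points to undistorted ones using r_u = r_d + k * r_d**3."""
    center = np.asarray(center, dtype=float)
    pts = np.asarray(points, dtype=float) - center
    r_d = np.hypot(pts[:, 0], pts[:, 1])   # distorted radius of each point
    scale = 1.0 + k * r_d ** 2             # r_u / r_d from the 3rd order model
    return pts * scale[:, None] + center

# Hypothetical distorted points in normalized coordinates, with k = 0.1.
distorted = [[1.0, 0.0], [0.0, 0.0], [0.0, -0.5]]
undistorted = undistort_points(distorted, k=0.1)
print(undistorted)
```

Points near the center barely move, while points at larger radii move outward, since the correction grows with the cube of the radius.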

Lens distortion and coefficients for correcting it are calculated in the following modules: Distortion, Dot Pattern (CPIQ-compliant), SFRplus, Checkerboard (which measure distortion along with sharpness and several other factors), and eSFR ISO (limited detail). We currently recommend Checkerboard, which has the most versatile and accurate calculations.

Distortion is worst in wide angle, telephoto, and zoom lenses. It is often worse for close-up images than for images at a distance. It can be easily corrected in software. The Imatest Radial Geometry module, Picture Window Pro, and PTLens have tools for removing it.

Related standards: CPIQ Local Geometric Distortion; ISO 17850, Geometric distortion (GD) measurements.

Light falloff (vignetting) and sensor nonuniformities

Light falloff (vignetting) darkens images near the corners. It can be particularly strong with wide angle lenses. It is measured by Flatfield and Flatfield-Interactive (an interactive module designed to work with direct image acquisition). Light falloff often improves when lenses are stopped down. It can be easily corrected in software or in the image processing pipeline. Picture Window Pro, PTLens, and several other programs have tools for removing it. Because moderate amounts of light falloff can be pictorially pleasing, it’s not always advisable to remove it completely.

Flatfield also measures other sensor nonuniformities, including color shading (nonuniformity), stuck (hot and dead) pixels, local sensitivity variations, spots (from dust), and noise.

Figure: original vs. vignetted.

Blemishes (visible sensor defects)

Blemishes are visible spots or marks in the image, caused by sensor defects or by dust in front of the sensor (typically separated by the Bayer, anti-aliasing, and infrared (IR) filters). They are extremely important in manufacturing. They are measured by Flatfield Blemish Detect (in Imatest Master).

Blemish Detect filters the image using a transfer function that is similar to the Contrast Sensitivity Function of the Human Visual System. Because of this, when filter parameters are set up properly, visible blemishes will be flagged and blemishes beneath the threshold of visibility will be ignored. This can significantly improve manufacturing yields. Flatfield Blemish Detect also measures hot and dead pixels and can perform most Flatfield functions.

Figure: original vs. blemishes.

Exposure accuracy and ISO Sensitivity

Exposure accuracy is not much of a problem with manually-adjustable cameras, where it can be easily determined (with the help of the histogram) and fixed using exposure compensation. But it can be an issue with fully automatic cameras and with video cameras that offer little opportunity for post-exposure tonal adjustment.

Exposure accuracy can be measured by photographing a scene that includes any test chart with a grayscale pattern and analyzing it with Stepchart (for grayscale stepchart-only patterns such as the Q-13/Q-14), Colorcheck (for the X-Rite ColorChecker), or Color/Tone Interactive or Color/Tone Auto (for any chart with a grayscale pattern).

Figure: original vs. overexposed.

Lateral chromatic aberration

Image Quality Video Series: Chromatic Aberration

Lateral chromatic aberration (LCA), also called “color fringing,” is a lens aberration that causes colors to focus at different distances from the image center. It is most visible near corners of images. It is explained in Chromatic aberration.

LCA is measured by SFR, SFRplus, eSFR ISO, SFRreg, and Checkerboard using (mostly) tangential edges near the image boundaries. Dot Pattern uses a grid of dots to make an I3A CPIQ-compliant LCA measurement.

LCA is worst with asymmetrical lenses, including ultrawides, true telephotos, and zooms. It is strongly affected by demosaicing. It can be fully corrected in software prior to demosaicing, but only partially corrected afterwards. Picture Window Pro has a fairly effective transformation. In the future, information provided by Imatest (detailed LCA profiles) may improve the degree of correction.

Figure: original vs. color-fringed.

Veiling glare (lens flare)

Veiling glare is stray light in lenses and optical systems caused by reflections between lens elements and the inside barrel of the lens. It predicts the severity of lens flare (image fogging, meaning loss of shadow detail and color, as well as “ghost” images) that can occur in the presence of bright light sources in or near the field of view. (It does not measure the ghosts in detail.) Veiling glare is measured by Stepchart and Flatfield (the ISO 18844 measurement), using a reflective Q-13 or Q-14 step chart or a plain white chart with a “black hole.”

Veiling glare can only be measured reliably with RAW images, preferably decoded with gamma = 1, because image processing (especially conversion to sRGB color space) can affect the “toe” region of the tonal response curve, which is critical to veiling glare measurements. Special care must be taken to distinguish pixel offsets (minimum pixel levels in sensor or camera outputs, built into some video/cinema color spaces such as Rec. 709) from flare light.

Lens flare is a major factor in limiting practical camera Dynamic Range. The Contrast Resolution chart and module are especially valuable for measuring true Dynamic Range in the presence of flare.

Figure: original vs. veiling glare.

Color moiré (aliasing)

Color moiré is color banding that can appear in images that have high spatial frequency repetitive patterns, such as fabrics or picket fences. The example on the right is a detail of a shirt captured by the Canon Rebel XT with its sharp kit lens. The usual image wasn’t used because it doesn’t contain a repetitive pattern that illustrates color moiré.

Color moiré is an artifact that results from aliasing (image energy above the Nyquist frequency) in image sensors that employ Bayer color filter arrays. It is explained in detail in Nyquist frequency, aliasing, and Color Moire. It is affected by lens sharpness, the sensor’s anti-aliasing (lowpass) filter (which softens the image), and demosaicing software. It tends to be worst with the sharpest lenses.

Color moiré is measured from hyperbolic wedges using the eSFR ISO and Wedge modules. A new color moiré metric has been added in Imatest 5.2: the maximum value of the mean of CIELAB Chroma \(C^* = \sqrt{a^{*2} + b^{*2}} \) measured inside the wedge. The effects of aliasing on image quality are measured in the Shannon information capacity calculation.

Figure: color moiré.
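The chroma-based metric can be sketched as follows. The text does not spell out how the mean and maximum are taken, so this example assumes one plausible reading: \(C^*\) is averaged across the wedge width at each position along the wedge, and the metric is the largest of those averages. The function name, the array layout (rows run along the wedge, columns across it), and the sample values are all hypothetical.

```python
import numpy as np

def color_moire_metric(a_star, b_star):
    """Maximum over wedge positions of the mean CIELAB chroma across the wedge.

    a_star, b_star: 2-D arrays of a* and b* values inside the wedge region,
    with rows running along the wedge and columns across it (assumed layout).
    """
    a_star = np.asarray(a_star, dtype=float)
    b_star = np.asarray(b_star, dtype=float)
    chroma = np.hypot(a_star, b_star)      # C* = sqrt(a*^2 + b*^2) per pixel
    mean_per_row = chroma.mean(axis=1)     # mean chroma at each position along the wedge
    return mean_per_row.max()

# Hypothetical wedge region: neutral at the first row, colored banding in the second.
a = [[0.0, 0.0], [3.0, 3.0], [1.0, 1.0]]
b = [[0.0, 0.0], [4.0, 4.0], [0.0, 0.0]]
metric = color_moire_metric(a, b)
print(metric)
```

A neutral (gray) wedge image has chroma near zero everywhere, so any sizable value indicates false color introduced by aliasing and demosaicing.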

Focus, defocus, and distance effects

Several important phenomena appear when a lens is defocused or the distance to the object is changed without changing the lens focus. Measuring them requires several images acquired at different distances (recommended) or different focus settings (difficult to accomplish repeatably). Multiple images can be used to measure these effects.

To make these measurements you’ll need to acquire batches of SFRplus, eSFR ISO, or Checkerboard images and process them with the FocusField postprocessor. Here is an animated GIF of the results.

Figure: original vs. defocused.

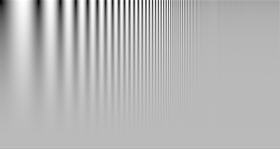

Image Processing artifacts: noise reduction and sharpening

Software (especially operations performed during RAW conversion) can cause significant visual artifacts, including oversharpening “halos” and loss of fine, low-contrast detail. These artifacts result from nonlinear (nonuniform) signal processing (so-called because it varies with the signal). Images may be sharpened (MTF boosted) in the proximity of contrasty features like edges and blurred (lowpass filtered) in their absence. This is most often done with bilateral filtering, which can be simulated with the Image Processing module. This generally improves measured performance, but may result in a degradation of perceived image quality, for example, a “plasticky” cartoon-like appearance of skin even though edges are strongly sharpened.

This loss of detail can be measured by the Log F-Contrast module, which analyzes the chart shown on the left. The chart varies logarithmically in spatial frequency on the horizontal axis and in contrast on the vertical axis. The Random/Dead Leaves module is also useful for observing and measuring artifacts: a particularly egregious case is presented here.

The effects of several types of artifact (aliasing from demosaicing, data compression, and clipping or saturation) can be measured with Shannon information capacity using the Star module.

Figure: original vs. NR+sharpening.
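The way bilateral filtering preserves edges while erasing low-contrast texture can be seen in one dimension. The sketch below is a generic textbook bilateral filter, not the Image Processing module's implementation. The function name, the parameters `sigma_space`, `sigma_range`, and `radius`, and the test signal are all assumptions chosen for illustration.

```python
import numpy as np

def bilateral_1d(signal, sigma_space=2.0, sigma_range=0.1, radius=6):
    """Generic 1-D bilateral filter: Gaussian weights in both distance and level difference."""
    signal = np.asarray(signal, dtype=float)
    offsets = np.arange(-radius, radius + 1)
    spatial = np.exp(-0.5 * (offsets / sigma_space) ** 2)
    padded = np.pad(signal, radius, mode="edge")
    out = np.empty_like(signal)
    for i in range(signal.size):
        window = padded[i:i + 2 * radius + 1]
        # Neighbors whose level differs a lot from the center get almost no weight,
        # so smoothing does not cross a strong edge.
        level = np.exp(-0.5 * ((window - signal[i]) / sigma_range) ** 2)
        weights = spatial * level
        out[i] = np.sum(weights * window) / np.sum(weights)
    return out

# Test signal: a contrasty edge (0.2 to 0.8) plus fine low-contrast texture.
x = np.arange(100)
signal = np.where(x < 50, 0.2, 0.8) + 0.02 * np.sin(np.pi * x / 2)
filtered = bilateral_1d(signal)

flat = slice(10, 40)                         # region away from the edge
texture_before = signal[flat].std()
texture_after = filtered[flat].std()
edge_step = filtered[50] - filtered[49]      # height of the edge after filtering
print(f"texture std: {texture_before:.4f} -> {texture_after:.4f}, edge step: {edge_step:.2f}")
```

The edge keeps nearly its full height while the texture is almost removed, which mirrors how such processing can score well on slanted-edge tests while losing fine detail.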

Data compression and transmission losses

Data compression and transmission losses can have a significant effect on image quality. The right side of the image on the left has been saved as a low quality JPEG. Banding, loss of low-contrast detail, and “waviness” near edges are visible. Some of these losses, especially the loss of low-contrast detail, can be analyzed with the Log F-Contrast and Random/Dead Leaves modules.

The SSIM module provides a detailed measurement of compression/transmission losses using two images: a processed and a reference image. The images must be derived from the same capture and must have the same pixel size. Any arbitrary image can be used with this module, including images of several Imatest test charts, especially the two mentioned in the above paragraph. The Image Processing module also contains an SSIM calculation.

The Information capacity measurement, which uses the Siemens star chart, also calculates the effects of data compression on image quality. It was tested by comparing JPEG with JPEG 2000.

Figure: original vs. low-quality JPEG.

Quality factors for printers

Note: Many of Print Test’s functions are now performed by Gamutvision. Print Test has been deprecated (nobody was using it).

Print Dmax

\(\text{Dmax} = -\log_{10}(\text{minimum print reflectivity})\) is a measure of the deepest black tone a printer/ink/paper combination can reproduce. It is an extremely important print quality factor. Prints with poor Dmax look pale and weak. Dmax = 1.7 is a good value for matte prints; 2.0 is a good value for glossy, semigloss, and luster prints. There have been reports that Epson Ultrachrome K3 printers have Dmax as high as 2.3 with Premium Luster paper. That would be outstanding.

Dmax is measured by Print Test, along with the printer’s tonal response curve and the color factors described below. Print Test requires a decent flatbed scanner and, for best accuracy, a step chart.

Dmax is affected by the printer, paper, and ink. The response curve is also affected by the ICC profile. Print Test can help with the selection of supplies and diagnosis of printing problems.

Figure: original vs. reduced Dmax.
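To give a feel for the Dmax values quoted above, the formula can be evaluated for a few reflectivities. The helper name `dmax` and the sample reflectivities are chosen for illustration only.

```python
import math

def dmax(min_reflectivity):
    """Dmax = -log10(minimum print reflectivity), with reflectivity as a fraction in (0, 1]."""
    if not 0.0 < min_reflectivity <= 1.0:
        raise ValueError("reflectivity must be a fraction in (0, 1]")
    return -math.log10(min_reflectivity)

# Hypothetical minimum reflectivities of the darkest printed patch.
for reflectivity in (0.02, 0.01, 0.005):
    print(f"reflectivity {reflectivity:.3f} -> Dmax {dmax(reflectivity):.2f}")
```

On this scale the deepest black of a print with Dmax = 1.7 reflects about 2% of the incident light, Dmax = 2.0 corresponds to 1%, and Dmax = 2.3 to about 0.5%.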

Print color gamut

Print color gamut is the range of colors a printer/ink/paper combination can reproduce. It is an important quality factor, though its importance may be somewhat overrated. (This statement is bound to generate controversy.) Relatively unsaturated colors such as skin tones dominate our impression of print quality. Such colors must be reproduced accurately. Gamut affects only highly saturated colors. Overall color response, especially for low to moderately saturated colors, is more important than gamut.

Print color gamut and overall color response are measured by Print Test, along with the density factors described above.

Print gamut is affected by the printer, paper, ink, and working color space. Color response is also affected by the ICC profile and rendering intent. Print Test can help with the selection of profiles, software settings, and the diagnosis of color problems.

Figure: original vs. reduced gamut.

Links

The Research Library Group (RLG) has some useful documents such as Guides to Quality in Visual Resource Imaging (2000). These articles are the predecessors to the above-mentioned RIT Direct Digital Image Capture work.

The University of Texas Laboratory for Image & Video Engineering is doing some interesting work on image and video quality assessment, which approaches the problem using information theory, natural scene statistics, wavelets, etc. Challenging material!